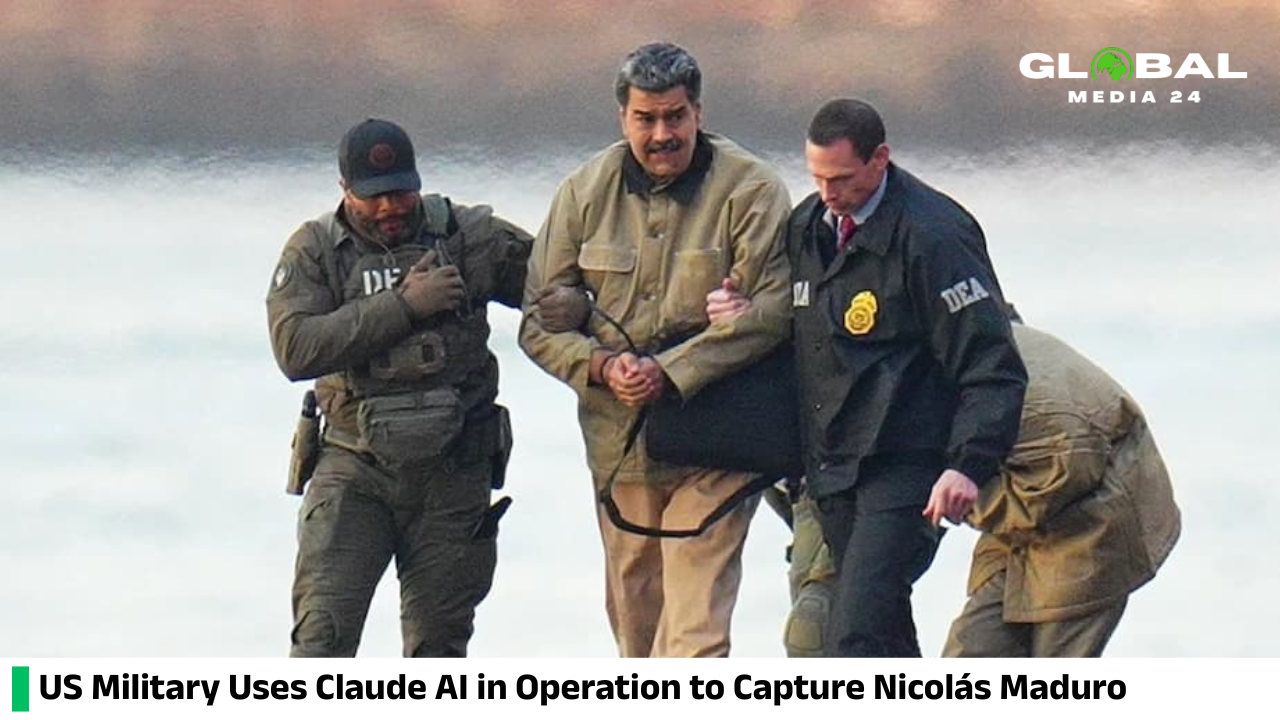

US military reportedly deployed the AI tool Claude in its operation to apprehend Nicolás Maduro

Being adopted by the military is seen as a significant credibility enhancer for AI firms, strengthening their legitimacy and supporting the lofty valuations driven by investors in an intensely competitive market.

According to a report in the Wall Street Journal, the US military used artificial intelligence in its operation to capture Venezuelan President Nicolás Maduro last month.

Anthropic was the first AI model developer whose technology was used for classified operations at the Pentagon.

Military adoption is considered a major credibility boost for AI companies, as it gives them legitimacy and justifies their high investor-driven valuations in a fiercely competitive environment.

The AI tool was used in the operation despite Anthropic’s usage guidelines, which forbid using Claude to incite violence, develop weapons, or conduct surveillance.

Maduro and his wife were captured in Caracas after bombings at several locations.

An Anthropic spokesperson said, “Any use of Claude, whether in the private sector or government, must comply with our usage policies, which govern how Claude can be deployed. We work closely with our partners to ensure compliance.”

Claude was deployed through Anthropic’s partnership with Palantir Technologies, a data company whose tools the Pentagon uses.

According to a Wall Street Journal report, US officials are considering canceling contracts worth up to $200 million because of Anthropic’s concerns about how Claude is used.

Anthropic’s Chief Executive, Dario Amodei, has called for greater regulation and protections to prevent harm from AI and has publicly expressed concerns about the use of AI in autonomous lethal operations and domestic surveillance, two major obstacles to its current contract with the Pentagon.

According to the Journal report, Defense Secretary Pete Hegseth announced in January that the Pentagon would not work with AI models that “won’t allow you to fight the war,” citing discussions with Anthropic.

Anthropic signed a $200 million contract last summer.

Several AI companies are developing custom tools for the US military, most of which are available only on unclassified networks typically used for military administration.

Anthropic is the only company whose model is available in classified settings, through a third party, but the government is still bound by the company’s usage policies.